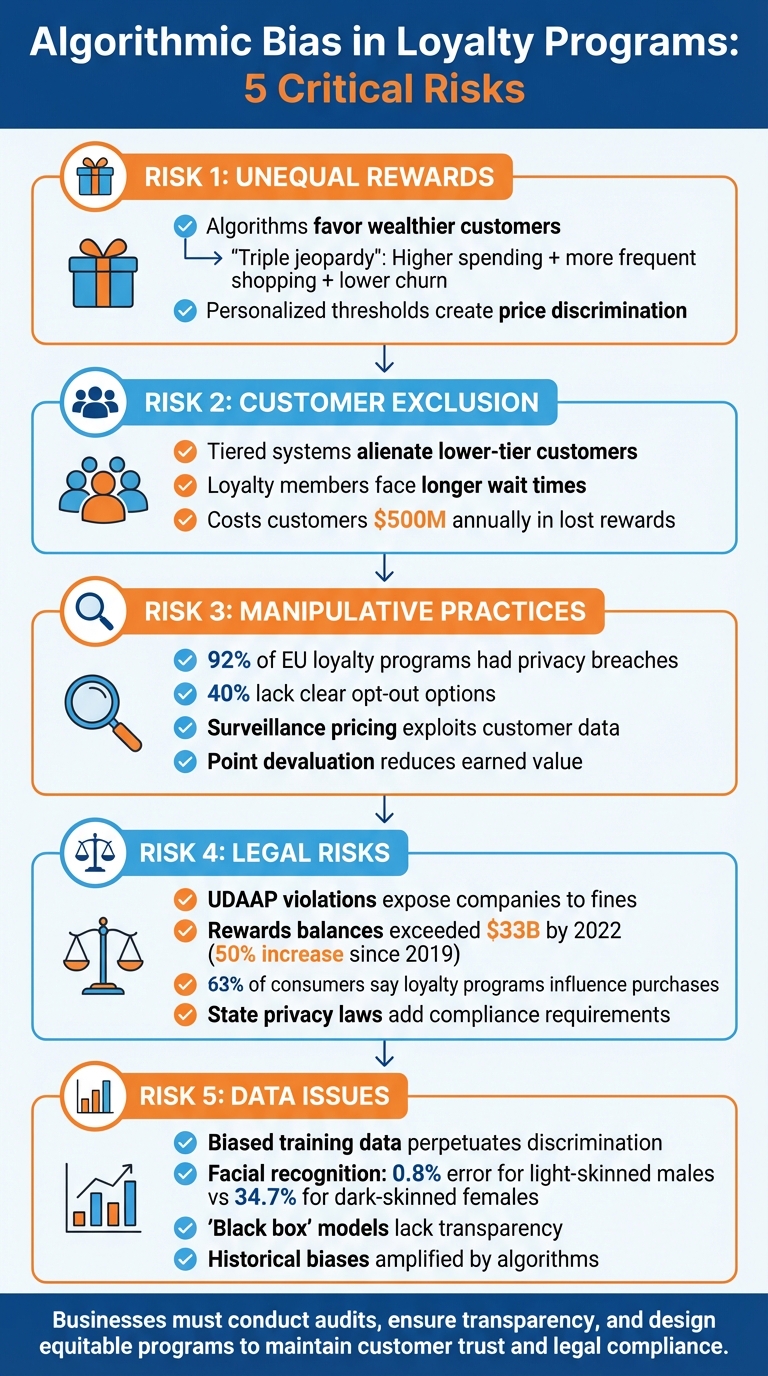

Algorithmic bias in loyalty programs can lead to unfair treatment of customers and significant challenges for businesses. Here’s what you need to know:

- Unequal Rewards: Algorithms often favor wealthier customers by prioritizing spending and churn metrics, creating disparities in reward distribution.

- Customer Exclusion: Tiered systems can alienate lower-tier customers, reducing trust and engagement.

- Manipulative Practices: Over-personalization, such as surveillance pricing and point devaluation, exploits customer data and erodes trust.

- Legal Risks: Biased algorithms may violate consumer protection laws, exposing companies to fines and reputation damage.

- Data Issues: Poor data quality and opaque models perpetuate bias and hinder fairness.

Businesses must address these risks through audits, transparent practices, and equitable loyalty programs to maintain customer trust and compliance.

5 Key Risks of Algorithmic Bias in Loyalty Programs

1. Unequal Reward Distribution Across Customer Groups

AI-driven loyalty programs often create tiered systems that disproportionately benefit wealthier customers. By relying on metrics like Customer Lifetime Value (CLV), these algorithms tend to favor individuals who spend more and are less likely to churn. This results in what experts call a "triple jeopardy" situation – wealthier customers not only spend more, but they also shop more frequently and are less likely to leave the program. Algorithms amplify these advantages, widening the gap between affluent customers and others.

The issue becomes even more problematic when proxy variables unintentionally reinforce bias. While algorithms may avoid directly using sensitive data like race or gender, they can still rely on factors like zip codes or purchasing habits, which often correlate with protected characteristics. A notable example occurred in April 2016, when Amazon’s same-day Prime delivery service excluded predominantly African-American neighborhoods in cities like Chicago, Dallas, and Newark. The algorithm prioritized zip codes with high concentrations of Prime members near warehouses, inadvertently creating a form of racial discrimination.
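
One rough way to check whether a "neutral" field is acting as a proxy is to see how well it predicts a protected attribute. The sketch below is illustrative only: the function name `proxy_strength`, the field names, and the toy records are all hypothetical, and a real audit would need properly collected data and more careful statistics.

```python
from collections import Counter, defaultdict

def proxy_strength(records, feature, protected):
    """Return (baseline, accuracy) for guessing `protected` from `feature`.

    baseline: accuracy of always guessing the most common protected value.
    accuracy: accuracy of guessing the most common protected value
              within each distinct value of `feature`.
    """
    overall = Counter(r[protected] for r in records)
    baseline = overall.most_common(1)[0][1] / len(records)

    by_feature = defaultdict(Counter)
    for r in records:
        by_feature[r[feature]][r[protected]] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_feature.values())
    return baseline, correct / len(records)

# Hypothetical toy data: two made-up zip codes, two made-up groups.
customers = [
    {"zip": "Z1", "group": "A"}, {"zip": "Z1", "group": "A"},
    {"zip": "Z1", "group": "A"}, {"zip": "Z1", "group": "B"},
    {"zip": "Z2", "group": "B"}, {"zip": "Z2", "group": "B"},
    {"zip": "Z2", "group": "B"}, {"zip": "Z2", "group": "A"},
]

baseline, accuracy = proxy_strength(customers, "zip", "group")
print(f"baseline={baseline:.2f}, accuracy using zip={accuracy:.2f}")
```

If the accuracy using the field is well above the baseline, the field carries information about the protected attribute, and a model that uses it can discriminate without ever seeing that attribute directly.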

"If historical biases are factored into the model, it will make the same kinds of wrong judgments that people do." – Nicol Turner Lee, Director, Center for Technology Innovation

Another concern lies in personalized redemption thresholds. Algorithms often set varying thresholds for rewards redemption to maximize profits. While this strategy benefits revenue, it creates a form of price discrimination, forcing some groups to spend significantly more to access the same benefits. Studies show that using uniform redemption thresholds could reduce bias but might also result in a revenue loss of up to 1.69 times compared to personalized strategies.
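
A small arithmetic sketch shows why personalized thresholds amount to price discrimination. Every number here is hypothetical (the segment names, the point thresholds, and the one-point-per-dollar earn rate), and the sketch does not model the revenue trade-off mentioned above.

```python
def spend_to_redeem(threshold_points, points_per_dollar=1.0):
    """Dollars a customer must spend to reach a redemption threshold."""
    return threshold_points / points_per_dollar

# Hypothetical personalized thresholds (in points) for the same reward.
personalized = {"segment_a": 500, "segment_b": 800}
# Hypothetical single threshold applied to everyone.
uniform_threshold = 650

required = {seg: spend_to_redeem(t) for seg, t in personalized.items()}
spend_gap = max(required.values()) / min(required.values())

for seg, dollars in required.items():
    print(f"{seg}: ${dollars:.0f} to unlock the reward")
print(f"Personalized gap: {spend_gap:.2f}x")
print(f"Uniform: ${spend_to_redeem(uniform_threshold):.0f} for everyone")
```

In this made-up case, one segment pays 1.6 times as much as the other for an identical benefit, while a uniform threshold removes the gap entirely.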

The roots of bias go deeper, touching on data quality and the lack of diversity in those developing these systems. Technical biases arise when training data overrepresents or underrepresents certain demographics, leading algorithms to make flawed assumptions about entire groups. Compounding this issue is the fact that women hold only about 9% of senior IT leadership roles, limiting diverse perspectives that could help identify and address these biases early on. Despite such risks, a Deloitte survey found that 75% of executives remain confident in the fairness of their AI models, even though hidden biases continue to persist.

2. Unintended Customer Exclusion and Loss of Trust

Tiered loyalty programs, with their "silver", "gold", and "platinum" levels, often create service gaps that alienate certain customers. Algorithms driving these hierarchies tend to shift resources toward higher-tier members and away from lower-tier and non-participating customers. This approach not only reduces the benefits for those customers but also increases the likelihood of broader service issues, leaving some of them feeling overlooked and undervalued.

The problem doesn’t stop at uneven rewards. Service failures can deeply damage trust. A May 2020 study revealed that loyalty program members often faced longer wait times and required more follow-ups to resolve issues compared to non-members. This added frustration, known as the "Boomerang Effect", illustrates how loyal customers experience greater emotional distress when they feel the brand has broken an unspoken emotional agreement.

"If you violate emotional trust, you might lose the customer forever." – Janey Whiteside, Chief Customer Officer, Walmart

Trust takes another hit when loyalty programs seem out of touch with their members. Policies like demanding original receipts, imposing return fees, or revoking rewards through unclear rules send mixed messages, undermining the sense of loyalty they aim to foster. This disconnect often leads to the loyalty paradox, where increasing program complexity actually decreases genuine engagement. Thomas Robertson, Academic Director of the Baker Retailing Center at Wharton, warns that these practices are akin to "killing their golden geese", as they disproportionately harm a brand’s most loyal and valuable customers.

To make matters worse, sharing anonymized behavioral data across industries can backfire on customers. Low scores in one area might lead to higher prices or fewer rewards in another. This practice collectively costs customers an estimated $500 million in lost rewards each year.

3. Manipulative Personalization Tactics

Personalization in AI-driven loyalty programs often walks a fine line between being helpful and becoming exploitative. While these programs promise tailored experiences, they can easily morph into tools that prioritize profit over customer well-being.

Many loyalty programs operate on a three-stage cycle – hook, hack, and hike – that shifts their focus from customer-friendly incentives to aggressive data collection and profit maximization. What starts as a seemingly beneficial program often turns into a system designed to harvest data and extract as much value as possible.

These programs track everything from cursor movements to search history and purchase behavior. The result? Detailed consumer profiles that allow companies to set individual maximum prices – a practice known as surveillance pricing. This approach shifts the goal from serving customers to exploiting them, raising serious privacy concerns.

An audit of 12 loyalty programs in the EU revealed alarming results: 92% had privacy breaches, and 40% failed to provide clear opt-out options for users.

"One big fail marketers make in personalized marketing is getting too granular with data – making campaigns creepy instead of relevant. It almost always backfires, killing engagement and trust." – Cade Collister, Metova

This quote highlights how excessive personalization can feel invasive, damaging both customer engagement and trust. When brands cross the line into overly specific targeting, what was meant to enhance the customer experience often ends up alienating them.

Stephanie T. Nguyen, Senior Fellow at Vanderbilt Policy Accelerator, also warns of the dangers of personalization:

"A tempting coupon at checkout can quickly become a path for companies to raise prices or rip off unsuspecting consumers. The growing prevalence of loyalty programs across our economy portends troubling tactics like AI-driven surveillance pricing."

The financial consequences for customers are significant. For instance, point devaluation – where companies experiment to find the "optimal" redemption thresholds – unfairly reduces the value of points customers have already earned. This tactic disproportionately benefits those who are already advantaged, further deepening existing inequalities in loyalty rewards.

The shift from personalization to manipulation isn’t just a breach of trust – it’s a direct hit to consumer rights and privacy.

4. Legal Compliance and Brand Reputation Risks

Loyalty algorithms that show bias can lead to serious legal trouble under UDAAP (Unfair, Deceptive, or Abusive Acts or Practices) and the Consumer Financial Protection Act. Federal regulators are increasingly treating loyalty points as part of consumers’ savings strategies. This means that unfair practices – like devaluing points or imposing unexpected restrictions – can result in enforcement actions. These legal challenges highlight broader operational risks that businesses must navigate.

Consider this: by 2022, rewards balances soared past $33 billion, with credits exceeding $40 billion – a staggering 50% jump since 2019. Yet, hidden terms and conditions cost consumers significant rewards every year. When loyalty algorithms use vague terms like "gaming" or "abuse" to revoke earned rewards, regulators may interpret these actions as deceptive bait-and-switch tactics.

"Large credit card issuers too often play a shell game to lure people into high-cost cards, boosting their own profits while denying consumers the rewards they’ve earned." – Rohit Chopra, Director, Consumer Financial Protection Bureau

Reputation is a delicate asset. Practices like point devaluation or surveillance pricing can quickly erode consumer trust. For some businesses – particularly airlines – their loyalty programs are often more valuable than the core business itself. With 63% of consumers reporting that loyalty programs influence their purchasing decisions, any hint of unfairness can directly hurt a company’s bottom line.

Companies must also account for the actions of their partners. If a technical issue or reward devaluation happens on a partner’s platform, the issuing company can still be held legally accountable. On top of this, state privacy laws in California and Colorado now regulate how loyalty program data is collected and shared, making compliance a top concern for state Attorneys General. To avoid legal pitfalls, all advertising and transactions tied to loyalty programs must be fair, truthful, and transparent.

5. Poor Data Quality and Lack of Model Transparency

High-quality data is the backbone of any effective loyalty algorithm. But when the data used for training is incomplete or skewed, the resulting models often fail to perform well, especially for underrepresented groups. Take facial recognition datasets as an example: these datasets are often over 75% male and 80% white. In one study of commercial facial recognition systems, error rates for light-skinned males were as low as 0.8%, but for dark-skinned females, they jumped to a staggering 34.7%.

Another major challenge comes from historical biases embedded in the data. Past prejudices – whether in hiring, lending, or other areas – can be unintentionally carried forward and even amplified by machine learning models. Neutral-seeming data points like zip codes or spending habits can perpetuate these biases. For instance, a healthcare algorithm that impacted 200 million patients used healthcare spending as a stand-in for medical need. The result? Black patients were identified for extra care at less than half the rate of others.

"Flawed data is a big problem… especially for the groups that businesses are working hard to protect." – Lucy Vasserman, Google

These data issues are compounded when models lack transparency. Many advanced predictive models used for loyalty programs rely on complex statistical techniques that are difficult for non-experts to interpret or audit. When these models operate as "black boxes", it becomes nearly impossible to trace the reasons behind biased outputs. This lack of clarity not only obscures the root causes of unfairness but also makes it harder to communicate effectively with customers, eroding trust in how rewards are allocated.

To tackle these challenges, businesses can adopt several strategies. Developing "bias impact statements" and applying inclusive design principles are key steps to ensure diverse representation in training data. Regular audits using open-source tools like Google’s What-If Tool, IBM’s AI Fairness 360, or Microsoft Fairlearn can help identify and address performance gaps across different demographic groups. Additionally, data fields should always be validated against a single, reliable "source of truth" before analysis begins.
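
The core of such an audit can be sketched in a few lines of plain Python. This is a simplified illustration, not the API of any of the tools named above: the function `audit_by_group`, the group labels, and the sample rows are hypothetical. Each row holds a customer group, whether the model offered a reward (1 or 0), and whether the customer actually qualified (1 or 0).

```python
from collections import defaultdict

def audit_by_group(rows):
    """Compute selection and error rates per group.

    rows: iterable of (group, predicted, actual) with 0/1 labels.
    """
    stats = defaultdict(lambda: {"n": 0, "selected": 0, "errors": 0})
    for group, predicted, actual in rows:
        s = stats[group]
        s["n"] += 1
        s["selected"] += predicted
        s["errors"] += int(predicted != actual)
    return {
        g: {
            "selection_rate": s["selected"] / s["n"],
            "error_rate": s["errors"] / s["n"],
        }
        for g, s in stats.items()
    }

# Hypothetical audit sample.
rows = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0), ("group_a", 0, 0),
    ("group_b", 1, 1), ("group_b", 0, 1), ("group_b", 0, 1), ("group_b", 0, 0),
]

report = audit_by_group(rows)
rates = [m["selection_rate"] for m in report.values()]
# Ratio of the lowest to the highest selection rate; 1.0 means parity.
selection_ratio = min(rates) / max(rates)

for group, metrics in report.items():
    print(group, metrics)
print(f"selection ratio: {selection_ratio:.2f}")
```

A ratio far below 1.0, or error rates that differ sharply between groups, is a signal to investigate further. What counts as an acceptable gap is a policy decision that the code cannot make.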

Loyalty platforms like meed prioritize rigorous data validation and transparency, enabling businesses to create fair and trustworthy loyalty programs that customers can rely on.

Conclusion

Bias in loyalty program algorithms can erode the very trust and loyalty they aim to build. When algorithms perpetuate existing prejudices or function as opaque "black boxes", customers often face unfair treatment, which can push them away.

Regulators are paying closer attention to loyalty programs under UDAAP and state privacy laws. Practices such as reducing the value of earned rewards or obscuring redemption restrictions not only harm customers but also expose brands to potential fines and reputational risks.

Beyond avoiding legal pitfalls, addressing bias is critical for maintaining operational fairness. Businesses need tools to simulate outcomes before models go live, audit error rates across diverse customer groups, and monitor program performance as new data becomes available. Adopting privacy-first principles and being transparent about how rewards are earned and redeemed can go a long way in fostering customer trust.

meed simplifies bias detection with tools like visual dashboards, scenario testing, and analytics, ensuring rewards are distributed fairly. Features such as QR code rewards, digital stamp cards, and wallet integration allow businesses to design loyalty programs that are both user-friendly and equitable – programs customers can rely on.

Building fairness and transparency into every step, from data collection to reward redemption, is essential for a loyalty program’s success. The best-performing programs can deliver up to three times the engagement of less effective ones and capture an average of 35 percentage points more of the customer wallet. Achieving this level of success depends on treating all customers fairly, operating with transparency, and consistently delivering equitable rewards.

FAQs

How can businesses create fair and unbiased AI-driven loyalty programs?

To create fair AI-driven loyalty programs, businesses need to tackle algorithmic bias head-on. Bias in algorithms can lead to uneven reward distribution, leaving some customers feeling undervalued or frustrated. A fair approach ensures rewards are distributed based on clear, merit-based criteria, treating all participants equally.

Achieving this starts with establishing ethical principles like transparency and non-discrimination. Using diverse and representative data is crucial to minimize bias, while regular audits of algorithms help catch and address any imbalances. Adding human oversight for critical decisions ensures a balanced approach, and compliance with privacy laws such as the CCPA and GDPR safeguards customer trust.

Platforms like meed can streamline these efforts by centralizing loyalty data. Features like digital stamp cards and QR-code rewards make it easier to track reward patterns, spot irregularities, and fine-tune algorithms. This proactive approach helps build a loyalty program that’s not only effective but also inclusive and trustworthy for every customer.

What legal challenges can arise from biased algorithms in loyalty programs?

Using biased algorithms in loyalty programs can put companies at serious legal risk, especially under U.S. consumer protection laws and the growing body of AI regulations. When algorithms unfairly favor or disadvantage certain customers – like granting fewer reward points or creating unclear redemption rules – they may violate laws designed to prevent unfair, deceptive, or abusive practices (UDAAP). This opens the door to regulatory actions, hefty fines, or even lawsuits.

Adding to the challenge, state-level laws like California’s upcoming AI regulations are raising the bar. These rules demand transparency in automated decision-making and strictly prohibit outcomes that could be discriminatory. Companies caught using biased algorithms could face financial penalties, be required to take corrective actions, and suffer damage to their reputation. To avoid these pitfalls, ensuring fairness and clarity in how loyalty program algorithms operate is not just important – it’s essential.

How does the quality of customer data affect the fairness of loyalty program algorithms?

The quality of customer data is a key factor in shaping loyalty program algorithms that treat customers fairly. When data is accurate, comprehensive, and current, algorithms can better recognize genuine purchasing behaviors, ensuring rewards are distributed in line with actual customer activity. This alignment helps loyalty programs reflect real-world habits rather than being skewed by incomplete or incorrect information.

On the flip side, poor-quality data – like outdated records or biased samples – can lead to unfair results. Some customers might end up with rewards they didn’t truly earn, while others could miss out unfairly. By keeping customer data clean and dependable, businesses can build loyalty programs that feel fair, reliable, and appealing to everyone involved.
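
As a minimal sketch of what keeping data "clean and dependable" can look like in practice, the snippet below compares a working dataset against a single source of truth before any analysis runs. The function `find_mismatches`, the field names, and the sample records are hypothetical and stand in for whatever systems a business actually uses.

```python
def find_mismatches(working, source_of_truth, fields):
    """List records whose fields disagree with the source of truth.

    Returns tuples of (customer_id, field, working_value, true_value).
    Customers absent from the source of truth are flagged as "<missing>".
    """
    mismatches = []
    for customer_id, record in working.items():
        truth = source_of_truth.get(customer_id)
        if truth is None:
            mismatches.append((customer_id, "<missing>", None, None))
            continue
        for field in fields:
            if record.get(field) != truth.get(field):
                mismatches.append(
                    (customer_id, field, record.get(field), truth.get(field))
                )
    return mismatches

# Hypothetical records keyed by customer ID.
source_of_truth = {
    "c1": {"tier": "gold", "points": 1200},
    "c2": {"tier": "silver", "points": 300},
}
working = {
    "c1": {"tier": "gold", "points": 1200},
    "c2": {"tier": "silver", "points": 250},  # stale points balance
    "c3": {"tier": "gold", "points": 50},     # not in the source of truth
}

issues = find_mismatches(working, source_of_truth, ["tier", "points"])
for issue in issues:
    print(issue)
```

Running a check like this before training or scoring helps keep stale balances and orphaned records from quietly skewing who receives rewards.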